Mastering Object Detection: Training YOLO-NAS on Custom Datasets

Jun 22, 2023

What is YOLO-NAS?

YOLO-NAS (You Only Look Once Neural Architecture Search) is a state-of-the-art (SOTA) real-time object detection model and a new addition to the YOLO family. YOLO has been around for a while: it was introduced in 2015 in the paper "You Only Look Once". Ultralytics presented YOLOv8 in January 2023, and now Deci AI has released YOLO-NAS, which achieves strong accuracy at very low latency and, according to Deci, the best accuracy-latency tradeoff to date.

YOLO-NAS achieves a higher mAP value at lower latencies when evaluated on the COCO dataset and compared to its predecessors, YOLOv6 and YOLOv8.

Let's look at how Deci achieved this.

Neural Architecture Search

The NAS in YOLO-NAS stands for Neural Architecture Search.

Most of the time, model architectures are designed by human experts. Because the number of potential architectures is huge, even a design that reaches great results is unlikely to be the best one possible, and other architectures could still yield better results. Neural Architecture Search was invented to automate this search. It has three components.

Search Space:

A search space defines the set of valid possible architectures to choose from.

Search Algorithm:

A search algorithm decides how candidate architectures are selected from the search space.

Evaluation Strategy:

Evaluation strategy is used to compare the candidate architectures.
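The three components above can be illustrated with a toy random search. This is only a sketch of the general idea, not AutoNAC: the search space values and the scoring formula are made up for illustration, and a real evaluation strategy would train or benchmark each candidate on the target hardware.

```python
import random

# Search space: every valid architecture is one choice per key (hypothetical toy values).
SEARCH_SPACE = {
    "depth": [2, 4, 6],
    "width": [32, 64, 128],
    "kernel_size": [3, 5],
}


def sample_architecture(rng):
    """Search algorithm (plain random search): draw one candidate from the search space."""
    return {name: rng.choice(options) for name, options in SEARCH_SPACE.items()}


def evaluate(arch):
    """Evaluation strategy: a made-up score that rewards capacity and penalises latency."""
    accuracy_proxy = arch["depth"] * 2 + arch["width"] / 16
    latency_proxy = arch["depth"] * arch["width"] * arch["kernel_size"] / 100
    return accuracy_proxy - latency_proxy


def random_search(num_trials=20, seed=0):
    """Sample num_trials candidates and keep the one with the highest score."""
    rng = random.Random(seed)
    candidates = [sample_architecture(rng) for _ in range(num_trials)]
    return max(candidates, key=evaluate)


best = random_search()
print(best, round(evaluate(best), 2))
```

Random search is the simplest possible search algorithm; it is used here only because it keeps the three roles easy to tell apart.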

To come up with the model architecture for YOLO-NAS, Deci AI used its own Neural Architecture Search implementation called AutoNAC, which stands for Automated Neural Architecture Construction.

AutoNAC was given all the details needed to search for a YOLO architecture, which created a huge initial search space of about 10^14 possible architectures. AutoNAC is hardware-aware, and it found an architecture for YOLO-NAS that is optimized for the Nvidia T4 in a process that took 3,800 GPU hours.

YOLO-NAS is available as part of the super-gradients package maintained by Deci.

The following chart below shows the results of Deci’s benchmarks on the YOLO-NAS

Environment Setup

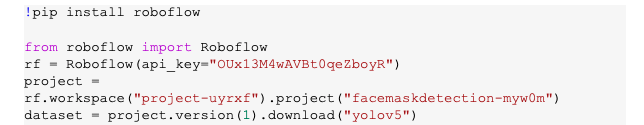

Before starting the training, we need to prepare the Python environment. Let's start by installing four pip packages. Since YOLO-NAS is available as part of the super-gradients package, we will install super-gradients first, along with imutils, roboflow and pytube. The roboflow package allows us to download the dataset from Roboflow Universe.

If you are using a Google Colab or Anaconda Jupyter notebook, please restart the runtime after the packages above have finished installing.
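As a rough sketch, the snippet below checks which of the four packages are still missing and builds the matching pip command. The helper names are hypothetical, and the mapping from pip names to import names is an assumption based on how these packages are usually imported.

```python
import importlib.util
import sys

# pip distribution name -> import name (assumed mapping)
PACKAGES = {
    "super-gradients": "super_gradients",
    "imutils": "imutils",
    "roboflow": "roboflow",
    "pytube": "pytube",
}


def missing_packages(packages=PACKAGES):
    """Return the pip names of packages that cannot be found in this environment."""
    return [
        pip_name
        for pip_name, module_name in packages.items()
        if importlib.util.find_spec(module_name) is None
    ]


def pip_install_command(pip_names):
    """Build a pip command that targets the interpreter running this code."""
    return [sys.executable, "-m", "pip", "install", *pip_names]


missing = missing_packages()
if missing:
    print("Run:", " ".join(pip_install_command(missing)))
else:
    print("All packages are already installed.")
```

In a notebook, the equivalent is a single cell such as `!pip install super-gradients imutils roboflow pytube`, followed by a runtime restart.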

Inference with YOLO-NAS Using Pre-trained COCO Model

Before we start training, we will first test inference using one of the pre-trained models and this will also familiarize us with the YOLO-NAS API.

Load YOLO-NAS Model

To perform inference using the pre-trained COCO model, we first need to choose the size of the model. YOLO-NAS comes in three sizes: yolo_nas_s, yolo_nas_m and yolo_nas_l.

yolo_nas_s is the smallest and fastest, but it is less accurate than the other two. yolo_nas_l is the largest and most accurate of the three, but also the slowest. yolo_nas_m offers a middle ground between the two.
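A minimal sketch of loading one of these models, assuming super-gradients is installed and that `models.get` with `pretrained_weights="coco"` is available in your version of the package. The `load_pretrained` helper and the size labels are hypothetical names used only for this example.

```python
# Map a human-readable size to the model names listed above.
MODEL_NAMES = {"small": "yolo_nas_s", "medium": "yolo_nas_m", "large": "yolo_nas_l"}


def load_pretrained(size="small"):
    """Load a COCO-pretrained YOLO-NAS model of the given size."""
    if size not in MODEL_NAMES:
        raise ValueError(f"size must be one of {sorted(MODEL_NAMES)}")
    # Imported lazily so this helper can be defined before super-gradients is installed.
    from super_gradients.training import models

    return models.get(MODEL_NAMES[size], pretrained_weights="coco")
```

Calling `load_pretrained("large")` downloads the weights on first use, so the first call may take a while.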

YOLO-NAS Model Inference

Inference involves setting the confidence threshold, calling the predict method, and displaying the output image with the .show() function.
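These steps can be sketched as a small helper. It assumes `model` is a YOLO-NAS model loaded through super-gradients; the `run_inference` name and the 0.35 default threshold are illustrative choices, not values taken from the original notebook.

```python
def run_inference(model, image, conf=0.35):
    """Run detection on an image (for example a file path) and display the annotated result."""
    if not 0.0 <= conf <= 1.0:
        raise ValueError("conf must be between 0 and 1")
    # Detections scoring below `conf` are dropped from the prediction.
    predictions = model.predict(image, conf=conf)
    predictions.show()
    return predictions
```

A lower threshold shows more boxes, including weak ones, while a higher threshold keeps only the detections the model is most confident about.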

Fine Tune YOLO-NAS with Open-source Datasets:

To fine-tune (train) the YOLO-NAS model, we first need to gather the data and convert the dataset to the YOLO format. For this I will be using the Face Mask dataset available on Roboflow; we will export the dataset from Roboflow into the Google Colab notebook.
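A sketch of the export step with the roboflow package follows. The API key, workspace, project name and version number are placeholders that you copy from the dataset's export dialog on Roboflow. The train/valid/test folder layout returned by `split_dirs` is an assumption about the YOLO-format export, so check it against what actually lands on disk.

```python
import os


def download_dataset(api_key, workspace, project, version):
    """Download a Roboflow dataset in YOLO format and return its local folder."""
    # Imported lazily: requires `pip install roboflow`.
    from roboflow import Roboflow

    rf = Roboflow(api_key=api_key)
    dataset = rf.workspace(workspace).project(project).version(version).download("yolov5")
    return dataset.location


def split_dirs(location):
    """Image and label folders for each split (assumed export layout)."""
    return {
        split: {
            "images": os.path.join(location, split, "images"),
            "labels": os.path.join(location, split, "labels"),
        }
        for split in ("train", "valid", "test")
    }
```

The returned location is the `data_dir` that the training data loaders need later on.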

Select Hyperparameter Values

To train the YOLO-NAS model, we will set several key parameters. First, we will set the model size. There are three options available: small, medium and large. The large model takes longer to train and needs more memory than the small model, so if you have limited memory you can consider using the small YOLO-NAS model.

Next, we will set the batch size. This parameter dictates how many images will pass through the neural network during each iteration of the training process. A larger batch size will speed up the training process but will also require more memory.

Before we start training, we need to define the number of epochs for the training process. This is essentially the number of times the entire dataset will pass through the neural network.
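To make the relationship between these three settings concrete, here is a small sketch. The `TrainConfig` class and its default values (batch size 8, 25 epochs) are illustrative assumptions, not recommendations from the original notebook.

```python
import math
from dataclasses import dataclass


@dataclass
class TrainConfig:
    model_name: str = "yolo_nas_s"  # or "yolo_nas_m" / "yolo_nas_l"
    batch_size: int = 8             # images per training iteration
    max_epochs: int = 25            # full passes over the dataset

    def iterations_per_epoch(self, num_images):
        """Number of batches needed for one full pass over the dataset."""
        return math.ceil(num_images / self.batch_size)

    def total_iterations(self, num_images):
        """Number of batches processed over the whole training run."""
        return self.iterations_per_epoch(num_images) * self.max_epochs


config = TrainConfig()
print(config.total_iterations(num_images=1000))  # 125 iterations per epoch * 25 epochs = 3125
```

Doubling the batch size halves the number of iterations per epoch, but each iteration needs roughly twice the memory for activations.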

Train a Custom YOLO-NAS Model:

Training a YOLO-NAS model is more verbose than training YOLOv8. Many features in the Ultralytics model only require passing a parameter in the CLI, whereas YOLO-NAS requires custom logic to be written.
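The sketch below shows roughly what that custom logic looks like with super-gradients. It follows the pattern used in publicly available YOLO-NAS fine-tuning notebooks, but import paths and parameter names have changed between super-gradients releases, so treat every name here as an assumption to verify against your installed version. The experiment name, checkpoint folder and learning-rate values are placeholders.

```python
import os


def dataset_params(location, split, classes):
    """Dataset settings for one split ("train", "valid" or "test") of a YOLO-format export."""
    return {
        "data_dir": location,
        "images_dir": os.path.join(split, "images"),
        "labels_dir": os.path.join(split, "labels"),
        "classes": classes,
    }


def train(location, classes, model_name="yolo_nas_s", batch_size=8, max_epochs=25,
          experiment_name="yolo_nas_custom", ckpt_root_dir="checkpoints"):
    # Lazy imports: these require `pip install super-gradients`.
    from super_gradients.training import Trainer, models
    from super_gradients.training.dataloaders.dataloaders import (
        coco_detection_yolo_format_train, coco_detection_yolo_format_val)
    from super_gradients.training.losses import PPYoloELoss
    from super_gradients.training.metrics import DetectionMetrics_050
    from super_gradients.training.models.detection_models.pp_yolo_e import (
        PPYoloEPostPredictionCallback)

    loader_params = {"batch_size": batch_size, "num_workers": 2}
    train_loader = coco_detection_yolo_format_train(
        dataset_params=dataset_params(location, "train", classes),
        dataloader_params=loader_params)
    valid_loader = coco_detection_yolo_format_val(
        dataset_params=dataset_params(location, "valid", classes),
        dataloader_params=loader_params)

    # Start from COCO weights and replace the head to match our class count.
    model = models.get(model_name, num_classes=len(classes), pretrained_weights="coco")

    training_params = {
        "max_epochs": max_epochs,
        "initial_lr": 5e-4,
        "lr_mode": "cosine",
        "cosine_final_lr_ratio": 0.1,
        "optimizer": "Adam",
        "mixed_precision": True,
        "loss": PPYoloELoss(use_static_assigner=False, num_classes=len(classes), reg_max=16),
        "valid_metrics_list": [DetectionMetrics_050(
            score_thres=0.1, top_k_predictions=300, num_cls=len(classes),
            normalize_targets=True,
            post_prediction_callback=PPYoloEPostPredictionCallback(
                score_threshold=0.01, nms_top_k=1000,
                max_predictions=300, nms_threshold=0.7))],
        "metric_to_watch": "mAP@0.50",
    }

    trainer = Trainer(experiment_name=experiment_name, ckpt_root_dir=ckpt_root_dir)
    trainer.train(model=model, training_params=training_params,
                  train_loader=train_loader, valid_loader=valid_loader)
    return trainer
```

For the Face Mask dataset, `classes` would be the list of three class names exactly as they appear in the exported data.yaml file.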

Evaluating the Custom YOLO-NAS Model:

After training, we will evaluate the model's performance using the test method provided by the Trainer. We pass in the test data loader, and the trainer returns a list of metrics, including mean Average Precision (mAP), which is commonly used for evaluating object detection models.
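A hedged sketch of the evaluation step follows. It assumes `trainer` is the super-gradients Trainer used for training and `test_loader` is a data loader built from the test split. The checkpoint file name and folder layout are assumptions: some super-gradients versions add a per-run subfolder, so adjust the path to wherever your checkpoints were written.

```python
import os


def checkpoint_path(ckpt_root_dir, experiment_name, filename="average_model.pth"):
    """Assumed location of the saved weights; verify against your checkpoint folder."""
    return os.path.join(ckpt_root_dir, experiment_name, filename)


def evaluate_model(trainer, test_loader, classes, weights_path, model_name="yolo_nas_s"):
    # Lazy imports: these require `pip install super-gradients`.
    from super_gradients.training import models
    from super_gradients.training.metrics import DetectionMetrics_050
    from super_gradients.training.models.detection_models.pp_yolo_e import (
        PPYoloEPostPredictionCallback)

    # Rebuild the architecture and load the fine-tuned weights.
    best_model = models.get(model_name, num_classes=len(classes),
                            checkpoint_path=weights_path)

    metric = DetectionMetrics_050(
        score_thres=0.1, top_k_predictions=300, num_cls=len(classes),
        normalize_targets=True,
        post_prediction_callback=PPYoloEPostPredictionCallback(
            score_threshold=0.01, nms_top_k=1000,
            max_predictions=300, nms_threshold=0.7))

    return trainer.test(model=best_model, test_loader=test_loader,
                        test_metrics_list=[metric])
```

Evaluating on the test split rather than the validation split gives a score on images that played no part in checkpoint selection.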

Inference on Image

We will run inference on random images and videos and visualize the results to see how the fine-tuned model performs on individual examples.

In the example above, we can see that our fine-tuned model is able to detect the mask. Our face mask dataset consists of three classes: with mask, without mask, and mask worn incorrectly.

Conclusion

YOLO-NAS is a new option for real-time object detection. It achieves a higher mAP value at lower latencies when evaluated on the COCO dataset and compared to its predecessors, YOLOv6 and YOLOv8.

Ready to up your computer vision game and harness the power of YOLO-NAS in your projects? Don't miss our upcoming YOLOv8 course, where we'll show you how to easily switch the model to YOLO-NAS using our modular AS-One library. The course will also cover training so that you can get the most out of this model. Sign up here to get notified when the course is available: https://www.augmentedstartups.com/YOLO+SignUp. Thanks to AS-One, we plan to launch within weeks rather than months.

FAQs

Yes, YOLO-NAS can be trained with custom datasets containing objects of various classes. Ensure that the dataset is properly annotated and covers the objects you want to detect.

The training time can vary depending on factors such as the size of the dataset, complexity of the objects, hardware resources, and the selected hyperparameters. It can range from a few hours to several days.

Yes, fine-tuning a pre-trained YOLO-NAS model on a custom dataset is a common practice. It can help improve the model's performance and reduce the training time required.

Yes, YOLO-NAS is designed to perform real-time object detection. Its efficiency and accuracy make it suitable for applications that require fast and reliable detection in real-world environments.

You can refer to academic papers, online tutorials, and official documentation of YOLO-NAS frameworks for in-depth understanding. Additionally, various communities and forums dedicated to computer vision and deep learning can provide valuable insights and guidance.
