Heart Rate Detection Using Eulerian Video Magnification & YOLOR

Mar 03, 2022

The human visual system, for all its sophistication, has limited spatio-temporal sensitivity. The problem is not that we can see only a very limited range of the light spectrum, but that a plethora of changes and motions are too quick or too small for our naked eyes to discern. In other words, there is a world of motion that is too subtle for the human eye but that a regular camera can still capture. Let’s look at what this means with the help of some examples.

In the GIF above, there is a video of a person’s wrist and a video of a sleeping baby. If you weren’t explicitly told that this was a GIF, you might assume that it was a still image. And no one would blame you, because in both cases the video appears to be almost completely still.

However, there is some subtle motion going on in both of them. If you were to touch the wrist of the person in the video, you would be able to feel a pulse. Similarly, if you were to hold the baby, you would be able to feel the subtle rise and fall of the chest with every breath. The issue is that these motions are too subtle for us to see, so instead we have to observe them through direct contact, through touch.

Hao-Yu Wu, Michael Rubinstein, et al. created a "motion microscope" that finds these subtle motions in videos and amplifies them so that they become obvious enough for us to see. Unlike a regular microscope, it doesn’t use optics to make small objects bigger. Instead, it uses image processing to reveal the tiniest motions and color changes.

Amplified wrist video

If we use this technique on the left video, it enables us to see the pulse in the wrist, and if we were to count that pulse, we could even figure out this person’s heart rate.

Amplified video of a breathing child

And if we use it on the right video, it makes the breathing motions of the chest more obvious. A bit off-putting perhaps, but it definitely makes it easier to tell that the child is in fact alive and breathing.

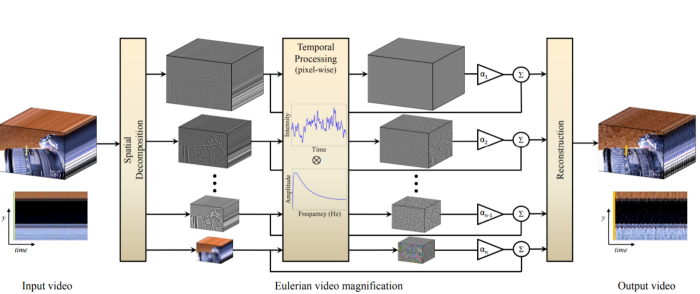

Eulerian Video Magnification

The basis of Eulerian Magnification is to consider the time series of color values at each spatial location (i.e., each pixel) and amplify the variations that fall within a temporal frequency band of interest. Simply put, the algorithm amplifies changes that happen within a particular range of frequencies. These changes can take the form of a small movement or a slight change in color.

Without going too deep into the math, the general intuition behind the method is that changes are more obvious when they are bigger. If we know the frequency at which a subtle change occurs, we can isolate that change and increase its amplitude to make it visible.
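To make that intuition concrete, here is a minimal sketch for a single pixel, not the authors’ implementation. It assumes the pixel’s brightness over time is available as a plain list, uses a naive DFT as an ideal band-pass filter, and adds the filtered variation back after scaling it. The names `magnify_trace` and `alpha`, and the synthetic 1.2 Hz flicker, are illustrative assumptions.

```python
import cmath
import math


def dft(samples):
    """Naive discrete Fourier transform (O(n^2)); fine for short traces."""
    n = len(samples)
    return [
        sum(x * cmath.exp(-2j * cmath.pi * k * t / n) for t, x in enumerate(samples))
        for k in range(n)
    ]


def inverse_dft_real(spectrum):
    """Inverse DFT that keeps only the real part of each sample."""
    n = len(spectrum)
    return [
        sum(c * cmath.exp(2j * cmath.pi * k * t / n) for k, c in enumerate(spectrum)).real / n
        for t in range(n)
    ]


def magnify_trace(trace, fps, low_hz, high_hz, alpha):
    """Amplify the part of `trace` that varies between low_hz and high_hz."""
    n = len(trace)
    spectrum = dft(trace)
    for k in range(n):
        # Bins past n/2 hold negative frequencies, so compare by magnitude.
        freq = (k if k <= n // 2 else n - k) * fps / n
        if not (low_hz <= freq <= high_hz):
            spectrum[k] = 0  # drop everything outside the band, including the mean
    variation = inverse_dft_real(spectrum)
    # Add the scaled band-limited variation back onto the original signal.
    return [value + alpha * delta for value, delta in zip(trace, variation)]


# Synthetic pixel (assumed data): brightness 100 with a faint 1.2 Hz flicker.
fps, seconds = 30, 5
trace = [100 + 0.5 * math.sin(2 * math.pi * 1.2 * t / fps) for t in range(fps * seconds)]
magnified = magnify_trace(trace, fps, low_hz=1.0, high_hz=100 / 60, alpha=50)

print(f"swing before: {max(trace) - min(trace):.2f}")
print(f"swing after:  {max(magnified) - min(magnified):.2f}")
```

Because the flicker lies entirely inside the chosen band, its swing grows by a factor of `alpha + 1` while the average brightness stays the same. A full video version would repeat this for every pixel, which is where a fast FFT routine becomes necessary.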

For example, human heart rates fall within a known range, and although it is not apparent to us, the constant inflow and outflow of blood slightly changes the color of the face. Think blushing, but far smaller in amplitude. So if we can pick up this slight change in color, we can find the heart rate of an individual.

The frequency of the human heartbeat is rather low: 60 to 100 beats per minute is only about 1 to 1.7 Hz, compared with the roughly 250 to 6,000 Hz of typical sound waves. So we select a band of temporal frequencies that includes plausible human heart rates. When this temporal filtering is applied to the lower spatial frequencies of the video, it enables subtle input signals to rise above the camera sensor noise and the quantization noise.
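As a small worked example of choosing that band, the sketch below converts the 60 to 100 bpm range into Hz and lists which DFT bins of a clip fall inside it. The 30 fps frame rate, the 10-second clip length, and the helper names are assumptions made for illustration.

```python
def bpm_to_hz(bpm):
    """Convert beats per minute to cycles per second."""
    return bpm / 60.0


def band_bins(n_frames, fps, low_hz, high_hz):
    """Indices of the non-negative DFT bins whose frequency lies in the band."""
    return [
        k for k in range(n_frames // 2 + 1)
        if low_hz <= k * fps / n_frames <= high_hz
    ]


low_hz, high_hz = bpm_to_hz(60), bpm_to_hz(100)

# Assumed clip: 10 seconds at 30 frames per second.
fps, n_frames = 30, 300
bins = band_bins(n_frames, fps, low_hz, high_hz)

print(f"band: {low_hz:.2f} to {high_hz:.2f} Hz")
print(f"bins in band: {bins[0]} to {bins[-1]}")
print(f"resolution: {60 * fps / n_frames:.1f} bpm per bin")
```

The spacing between bins is `fps / n_frames` Hz, so a longer clip gives a finer heart-rate resolution: with these assumed values each bin is 6 bpm wide.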

Notice the subtle movement with the change of color? Well, it turns out our heads move slightly with every wave of fresh blood that our heart pumps.

Just take a look at this demonstration. It uses a toy model with a transparent mannequin head, where rubber tubes stand in for simplified arteries, and instead of pumping blood, we pump compressed air. Watch what happens as the valve is opened and closed once a second, similar to a normal heart rate. The resulting motion is fairly similar to the exaggerated motion of real heads that we saw before.

Using either of these small rhythmic signals, the color change or the head movement, we can create an algorithm that detects the pulse rate of an individual without any contact whatsoever.
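One way such an algorithm could work is sketched below, under stated assumptions: the input is one number per frame (for instance the average color of a face region, or the vertical position of a tracked point on the head), and the pulse is taken to be the strongest frequency inside the plausible heart-rate band. The function name `estimate_bpm` and the synthetic test signal are hypothetical, and this is not necessarily the method used in the course.

```python
import cmath
import math


def estimate_bpm(signal, fps, low_bpm=60.0, high_bpm=100.0):
    """Return the in-band frequency (in bpm) with the most spectral power."""
    n = len(signal)
    mean = sum(signal) / n
    centered = [s - mean for s in signal]  # remove the constant offset
    best_bpm, best_power = None, -1.0
    for k in range(1, n // 2 + 1):
        bpm = 60.0 * k * fps / n  # frequency of DFT bin k, in beats per minute
        if not (low_bpm <= bpm <= high_bpm):
            continue
        # DFT coefficient of bin k, computed directly.
        coeff = sum(c * cmath.exp(-2j * cmath.pi * k * t / n) for t, c in enumerate(centered))
        power = abs(coeff) ** 2
        if power > best_power:
            best_bpm, best_power = bpm, power
    return best_bpm


# Synthetic per-frame signal (assumed data): a faint 72 bpm pulse
# riding on a much larger, slower lighting drift.
fps, seconds = 30, 10
signal = [
    120.0
    + 0.4 * math.sin(2 * math.pi * 1.2 * t / fps)  # 1.2 Hz = 72 bpm pulse
    + 2.0 * math.sin(2 * math.pi * 0.1 * t / fps)  # slow drift, outside the band
    for t in range(fps * seconds)
]

print(estimate_bpm(signal, fps))
```

Restricting the search to the band is what lets the faint pulse win over the much larger drift. The default 60 to 100 bpm limits mirror the range quoted above; a wider band would be needed for subjects whose heart rate falls outside it.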

The applications of this motion microscope go beyond detecting and counting rhythmic motion. It can also be used to reconstruct sound, based on how a curtain or the leaves of a plant move when the sound waves hit them.

Don’t get it? Imagine this scenario: you’re a spy looking through a window at two suspects who are having a heated argument, but all the windows are closed, so you can’t make out what they are saying. Using a camera, you can record something well-lit and lightweight, like a house plant, a piece of paper, or a plastic bag, and then use that recording to reconstruct the sound of the argument. Very cool, isn’t it?

We implement Eulerian Video Magnification and use it to detect the heart rate in our YOLOR course. Not only that, we create a bunch of interesting applications like BlackJack card counting, weed detection, and even implement object tracking. Enroll in our YOLOR course HERE today!
