Computer Vision In The Metaverse

Mar 02, 2022

With Facebook’s rebrand to Meta and multibillion-dollar valuations of metaverse-centric crypto projects like MANA, SAND, and GALA, it’s safe to say that the idea of the metaverse has seen a resurgence in interest. As a result, plenty of people have offered half-decent definitions, but most don’t appreciate the digital world that is already looming over us. This article explores just how “meta” our high-tech world already is, and why machine learning, specifically computer vision, will be key as the metaverse expands.

But What Is This ‘Metaverse’ You Speak Of?

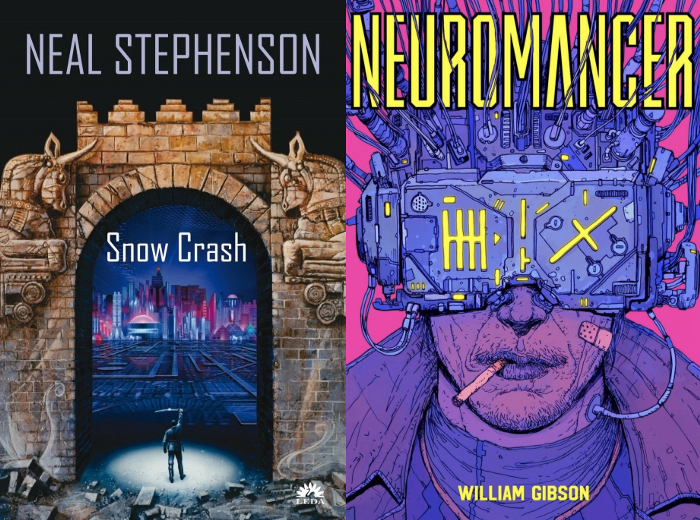

The term “metaverse” was coined by sci-fi novelist Neal Stephenson in his 1992 novel “Snow Crash” to describe the virtual world in which the protagonist (Hiro Protagonist) fraternizes and conquers real-world enemies through his avatar. The notion predates “Snow Crash,” however: it was popularized as “cyberspace” in William Gibson’s disruptive 1984 novel “Neuromancer.”

Simply put, ‘metaverse’ is a blanket term for the digital worlds that are meant to augment or extend the real world. This could be something really complex, like going to a virtual concert and listening to music created by virtual artists, or it could be something simple, perhaps something you’re doing right now. What if I told you that you’re in a metaverse right now, or at least interacting with one? Isn’t this text on your screen a replacement for what would have been a magazine just a decade ago? Isn’t this blog effectively a part of the Web2 metaverse with a low degree of immersion? Screens have not only replaced paper, they have also overcome paper’s limitation of requiring an external source of light.

Matthew Ball’s quasi-manifesto lays out some very helpful criteria when thinking about the metaverse.

> The most common conceptions of the Metaverse stem from science fiction. Here, the Metaverse is typically portrayed as a sort of digital “jacked-in” internet — a manifestation of actual reality, but one based in a virtual (often theme park-like) world, such as those portrayed in Ready Player One and The Matrix. And while these sorts of experiences are likely to be an aspect of the Metaverse, this conception is limited in the same way movies like Tron portrayed the Internet as a literal digital “information superhighway” of bits.

Where Does Computer Vision Come In?

There are three key elements of an ideal metaverse that we need to optimize for: interoperability, standardization, and perception/interface.

The degree of interoperability dictates how seamlessly virtual assets like avatars and digital items can travel between virtual spaces. Right now, most virtual assets are limited to the platform (or metaverse) they were created on. For instance, a CS:GO player cannot just port their skins over to another game that has the exact same guns, nor can a GTA V Online player use their character (that they’ve probably spent years designing and tweaking) in another game.

ReadyPlayerMe Avatar Customization Screen

ReadyPlayerMe enables people to create an avatar that they can use in hundreds of different virtual worlds, including in Zoom calls. Meanwhile, blockchain constructs such as cryptocurrencies and non-fungible tokens (NFTs) help facilitate the transfer of digital goods across virtual borders (protocols).

Standardization is what enables the interoperability of platforms and services across the metaverse. As with all mass-media technologies — from the printing press to texting — common technological standards are essential for widespread adoption. On a hardware level, we seem to be converging towards a singular Thunderbolt-enabled USB-C port for all devices. On a networking level, we have already decided upon standard protocols for different tasks.

The Protocols Behind E-Mails

For example, most mail clients rely on SMTP, IMAP, and POP3. This is why you can send an email from your Gmail account to a friend who uses another provider like ProtonMail. Just imagine how fragmented and tedious email would be if this weren’t the case. Organizations such as the Open Metaverse Interoperability Group are working towards defining comparable standards for the metaverse.
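To make the email analogy concrete, here is a minimal sketch using Python’s standard `email` package. It builds a message, serializes it to the standard text format that an SMTP server would relay, and parses it back the way any compliant client could. The addresses and message text are made up for illustration, and nothing is actually sent over the network.

```python
from email import message_from_string
from email.message import EmailMessage

# Hypothetical sender and recipient on two different providers.
msg = EmailMessage()
msg["From"] = "alice@example.com"
msg["To"] = "bob@example.org"
msg["Subject"] = "Meet me in the metaverse"
msg.set_content("See you at the virtual concert at 8.")

# Serialize to the standard text format that mail servers exchange.
wire_text = msg.as_string()

# A different client, written by a different vendor, can parse the same
# text because both sides agree on the message format.
received = message_from_string(wire_text)
print(received["Subject"])
print(received.get_payload().strip())
```

The point is that neither side needs to know which software the other is running. A shared asset format for avatars or digital items would play the same role for virtual worlds.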

Finally, perception and interface dictate how it feels to be in the virtual space and how you interact with it and with other avatars. From the end user’s perspective, these are arguably the most important aspects of the Metaverse. There’s an abundance of research showing that a sense of embodiment helps elevate the quality of online interactions. Even intuitively, why do we prefer video calls over voice calls? Because we feel more immersed in the experience, and because it’s closer to our normal perception of reality. This is where machine learning comes in.

Even beyond the limits of the human body, machine learning depends on real people to make sense of the world. The construction of the metaverse is not exempt from the irony of the information age: in order for humans to rely on machines, machines need humans to first teach them.

Reality scanning & integration of real-world objects into the metaverse

Let’s say we want to create a digital copy of an existing entity, like a grocery store that people can walk through and interact with. To achieve this, someone has to teach the model where the cereal aisle is, how to pick up a loaf of bread, or how to open a refrigerator door. On top of that, we’ll need to incorporate a bunch of vision models that generate the boxes of cereal and the refrigerator door, as well as models that segment surfaces and decide how light bounces off each one differently, such as the glass of the fridge door versus the frame surrounding it.
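As a rough illustration of that last step, the sketch below assumes a segmentation model has already labeled each pixel of a tiny image as glass, frame, or shelf. It then looks up a material for each label so a renderer could treat the surfaces differently. The class ids and the reflectivity and roughness numbers are invented placeholders, not measured values or the output of any particular model.

```python
# Hypothetical class ids that a segmentation model might output.
GLASS, FRAME, SHELF = 0, 1, 2

# Illustrative material parameters (made-up numbers for the sketch).
MATERIALS = {
    GLASS: {"name": "glass", "reflectivity": 0.9, "roughness": 0.05},
    FRAME: {"name": "metal frame", "reflectivity": 0.3, "roughness": 0.4},
    SHELF: {"name": "shelf", "reflectivity": 0.1, "roughness": 0.8},
}

# A tiny 4x4 label mask standing in for the segmented fridge door.
mask = [
    [FRAME, FRAME, FRAME, FRAME],
    [FRAME, GLASS, GLASS, FRAME],
    [FRAME, GLASS, GLASS, FRAME],
    [SHELF, SHELF, SHELF, SHELF],
]

def material_map(label_mask, field):
    """Turn a mask of class ids into a per-pixel map of one material field."""
    return [[MATERIALS[label][field] for label in row] for row in label_mask]

reflectivity = material_map(mask, "reflectivity")
for row in reflectivity:
    print(row)
```

In a real pipeline the mask would come from a trained segmentation network and the material table from measured or artist-authored data, but the lookup from label to surface behavior would have the same shape.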

Oculus Visual Tracking Controls

And if we don’t want to wear icky gloves or controllers, we need to train robust gesture recognition models that enable the user to interact naturally with the metaverse.
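To give a feel for what such a model consumes and produces, here is a deliberately simple, rule-based sketch of a “pinch” detector. It assumes some hand-tracking model has already produced 2D keypoints in normalized image coordinates. The landmark names, the sample coordinates, and the 0.25 threshold are all assumptions made for this example, and a production system would replace the hand-written rule with a trained classifier.

```python
import math

def is_pinch(landmarks, threshold=0.25):
    """Return True when the thumb and index fingertips are close together.

    The fingertip gap is divided by a rough hand size so the rule does not
    depend on how far the hand is from the camera.
    """
    hand_size = math.dist(landmarks["wrist"], landmarks["middle_base"])
    if hand_size == 0:
        return False  # degenerate detection, nothing to measure against
    gap = math.dist(landmarks["thumb_tip"], landmarks["index_tip"])
    return gap / hand_size < threshold

# Hypothetical keypoints for an open hand and a pinching hand.
open_hand = {
    "wrist": (0.50, 0.90),
    "middle_base": (0.50, 0.60),
    "thumb_tip": (0.30, 0.65),
    "index_tip": (0.45, 0.35),
}
pinching_hand = {
    "wrist": (0.50, 0.90),
    "middle_base": (0.50, 0.60),
    "thumb_tip": (0.44, 0.50),
    "index_tip": (0.46, 0.48),
}

print(is_pinch(open_hand))
print(is_pinch(pinching_hand))
```

A fixed rule like this breaks down quickly with occlusion, unusual hand shapes, or noisy keypoints, which is exactly why robust gesture recognition needs models trained on large, varied, human-labeled data.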

So, are you excited about the metaverse? Do you want to create a metaverse application? If you’re reading this, chances are you’re already somewhat proficient in artificial intelligence and computer vision. Why not use that as your inroad into a career in the metaverse? Check out our computer vision courses at the link below. We have a wide range of courses that not only cover state-of-the-art models like YOLOR, YOLOX, and SiamMask, but also include guided projects such as pose/gesture detection and creating your very own smart glasses.

www.augmentedstartups.com/store
